Made of Stardust

2023

Every atom of iron in your blood was forged in a dying star.

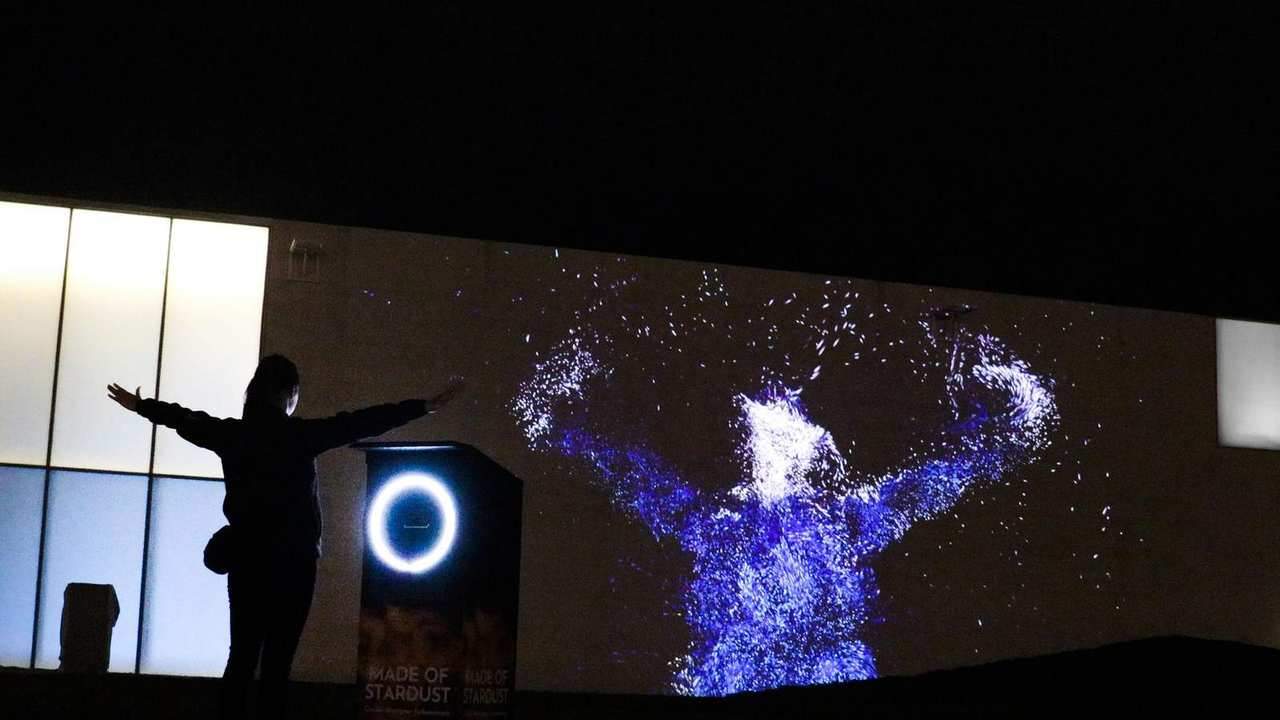

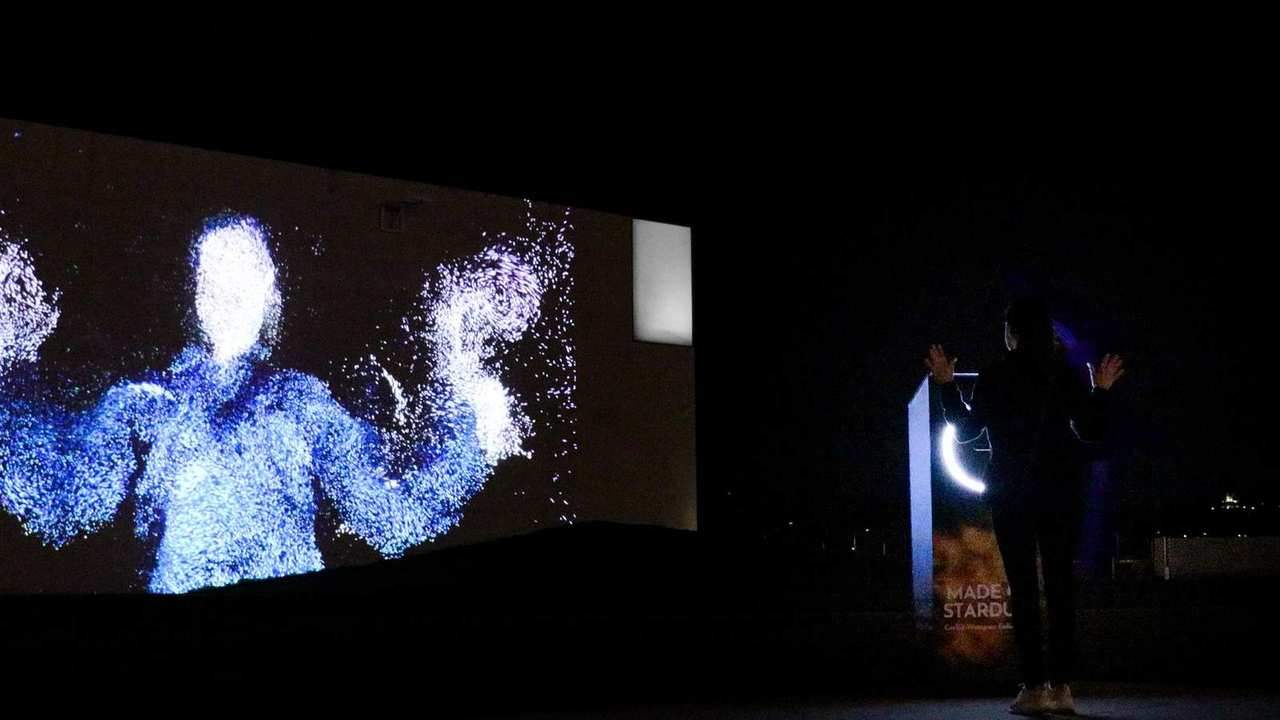

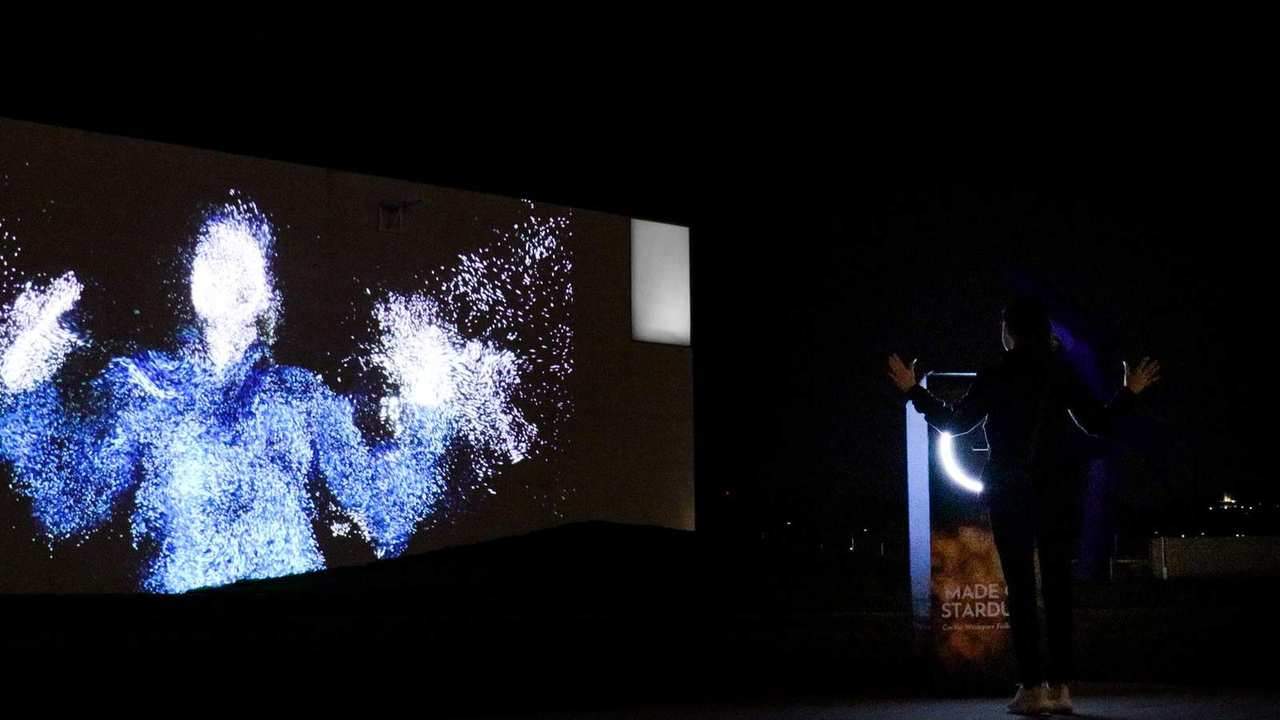

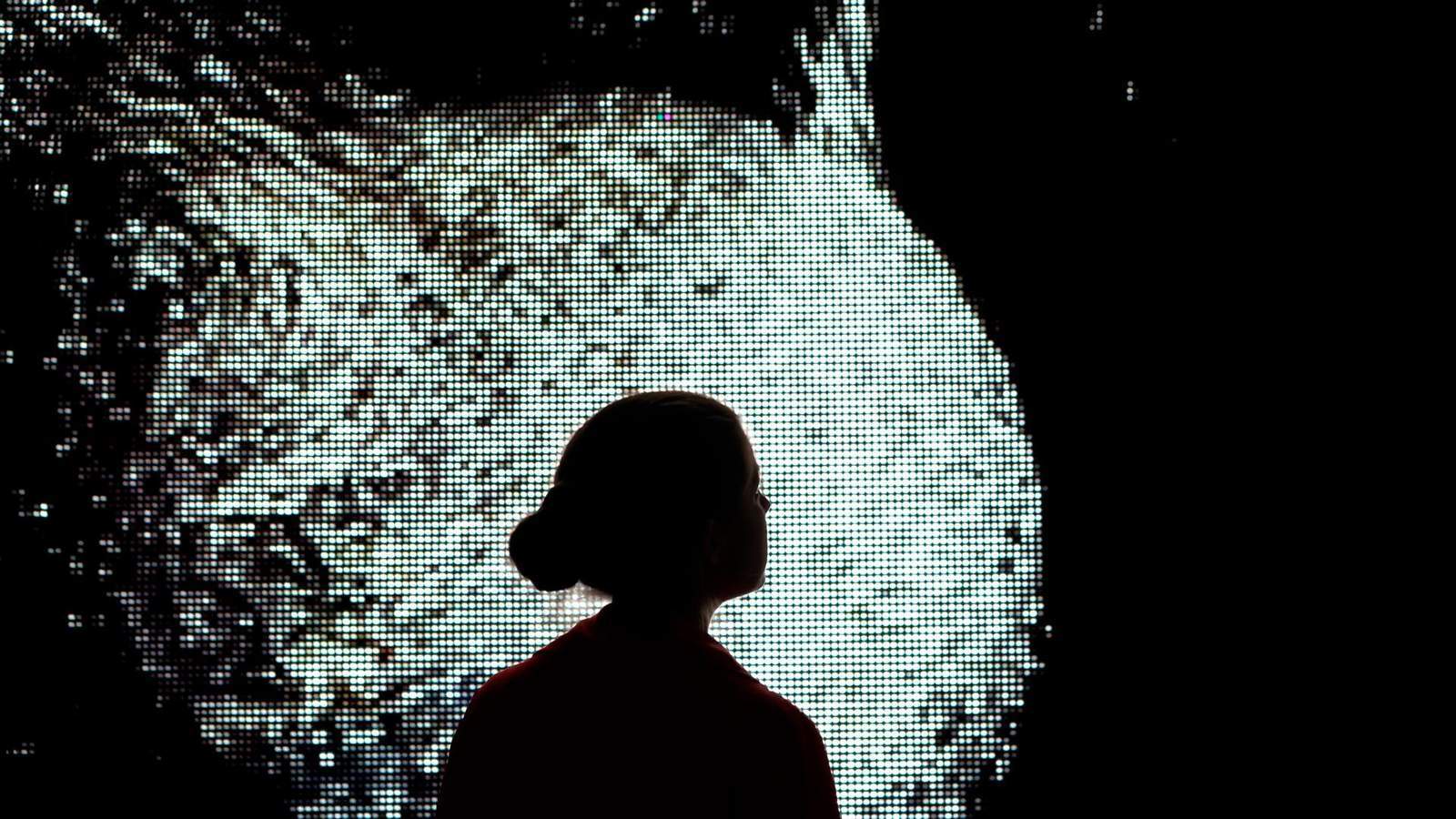

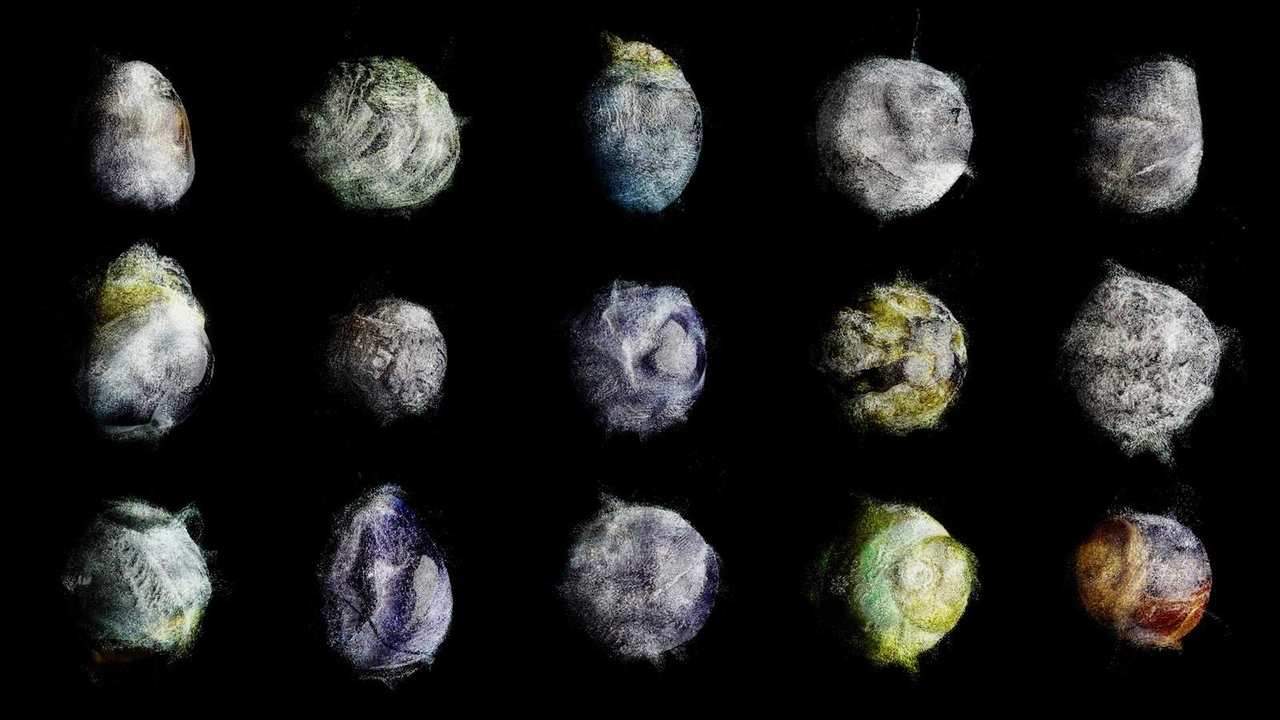

Made of Stardust transforms visitors’ bodies into avatars made of thousands of shining particle points, creating a direct, physical connection between the person standing in the room and the astrophysical matter they are made of. Participants step in front of a camera and watch themselves dissolve into a cloud of stardust that moves with them in real time.

We built the system in Unity using an Intel RealSense depth camera. A point cloud component converts the live depth stream into two dynamically animated attribute maps, position and colour, which drive a Unity Visual Effect Graph. The particle system reads these maps the same way it reads static point cache files, but at live camera frame rate, so the particle field stays tethered to whoever is standing in front of it.
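As a rough illustration of the depth-to-attribute-map step, the sketch below deprojects each depth pixel into a 3D point with a plain pinhole camera model and pairs it with a normalised colour. It is written in Python for readability and is not the Unity component used in the installation. The function name, the intrinsics (`fx`, `fy`, `cx`, `cy`) and the choice to mark pixels without a depth reading with zero alpha are all assumptions made for this example.

```python
def depth_to_attribute_maps(depth, colour, fx, fy, cx, cy):
    """Build a position map and a colour map from one camera frame.

    depth  : rows of depth values in metres (0 means "no reading")
    colour : rows of (r, g, b) tuples in the 0-255 range, same size as depth
    fx, fy, cx, cy : pinhole intrinsics (hypothetical values in this sketch)

    Returns two maps with the same layout as the input frame:
    positions hold (x, y, z) in metres, colours hold (r, g, b, a) in 0-1.
    """
    positions, colours = [], []
    for v, (depth_row, colour_row) in enumerate(zip(depth, colour)):
        pos_row, col_row = [], []
        for u, (z, (r, g, b)) in enumerate(zip(depth_row, colour_row)):
            if z <= 0:
                # No depth reading: park the texel at the origin and use
                # zero alpha so a particle system could hide it.
                pos_row.append((0.0, 0.0, 0.0))
                col_row.append((0.0, 0.0, 0.0, 0.0))
                continue
            # Pinhole deprojection; y follows image rows (pointing down),
            # so an engine with y-up would flip the sign.
            x = (u - cx) * z / fx
            y = (v - cy) * z / fy
            pos_row.append((x, y, z))
            col_row.append((r / 255, g / 255, b / 255, 1.0))
        positions.append(pos_row)
        colours.append(col_row)
    return positions, colours
```

In a real-time setting the two maps would be written into float textures every frame, which is the same shape of data a baked point cache provides, only refreshed live.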

Presence detection uses a fraction-of-pixels threshold rather than skeleton tracking: the system checks whether enough depth pixels fall within a registration distance to count as a person, running the test every nth frame to avoid accumulating perceptible delay. When a visitor enters, the system transitions from the ambient artwork video into the particle body. When they leave, a transition video bridges the cut before the loop resumes. Enter and leave delays are configured independently to tune the sensitivity of each threshold crossing.
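The presence logic described above can be sketched roughly as follows. This is a minimal Python illustration, not the installation's code: the class name, the default values, and the choice to express enter and leave delays as a number of consecutive checks (rather than seconds) are assumptions for the example.

```python
from dataclasses import dataclass, field


@dataclass
class PresenceDetector:
    # All defaults are hypothetical tuning values.
    max_distance_m: float = 2.0   # registration distance
    min_fraction: float = 0.15    # share of pixels that must be in range
    check_every: int = 5          # run the test every nth frame
    enter_delay: int = 2          # consecutive positive checks to enter
    leave_delay: int = 6          # consecutive negative checks to leave
    present: bool = field(default=False, init=False)
    _frame: int = field(default=0, init=False)
    _streak: int = field(default=0, init=False)

    def fraction_in_range(self, depth):
        """Share of pixels with a valid reading inside the registration distance."""
        if not depth:
            return 0.0
        near = sum(1 for d in depth if 0 < d <= self.max_distance_m)
        return near / len(depth)

    def update(self, depth):
        """Feed one frame (flat list of depths in metres); return presence state."""
        self._frame += 1
        if self._frame % self.check_every != 0:
            return self.present  # skipped frame: keep the last state
        detected = self.fraction_in_range(depth) >= self.min_fraction
        if detected == self.present:
            self._streak = 0  # observation agrees with the state: reset
        else:
            self._streak += 1
            needed = self.enter_delay if detected else self.leave_delay
            if self._streak >= needed:
                self.present = detected
                self._streak = 0
        return self.present
```

A change in the returned state is the point at which playback would switch between the ambient video, the particle body and the transition video. Keeping the two delays separate lets the piece react quickly to an arrival while tolerating brief dropouts before it decides a visitor has left.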

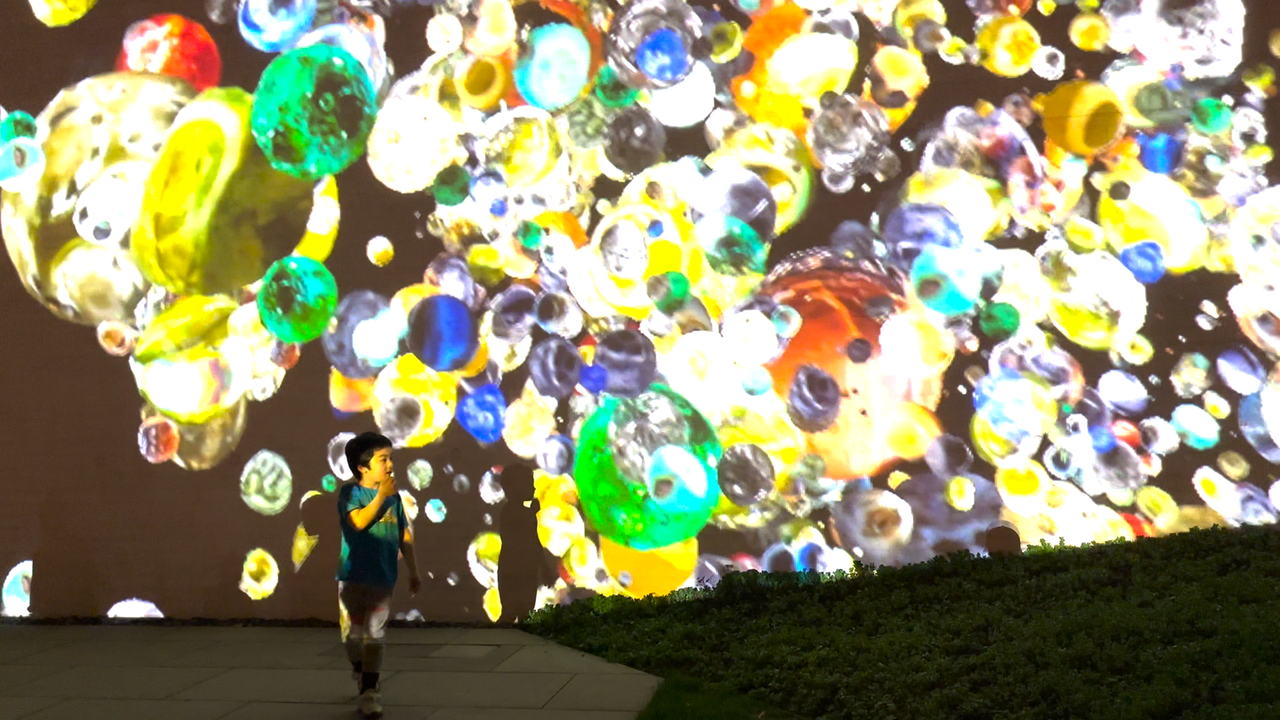

Developed in collaboration with researcher Arka Sarangi of the DARK research centre at the Niels Bohr Institute. Supported by the Novo Nordisk Foundation. Shown at Copenhagen Contemporary (2023) and the Kennedy Center for the Performing Arts, Washington D.C. (2023, 2025).

Venue

Kennedy Center, Washington D.C. / Copenhagen Contemporary

2023 – 2025
