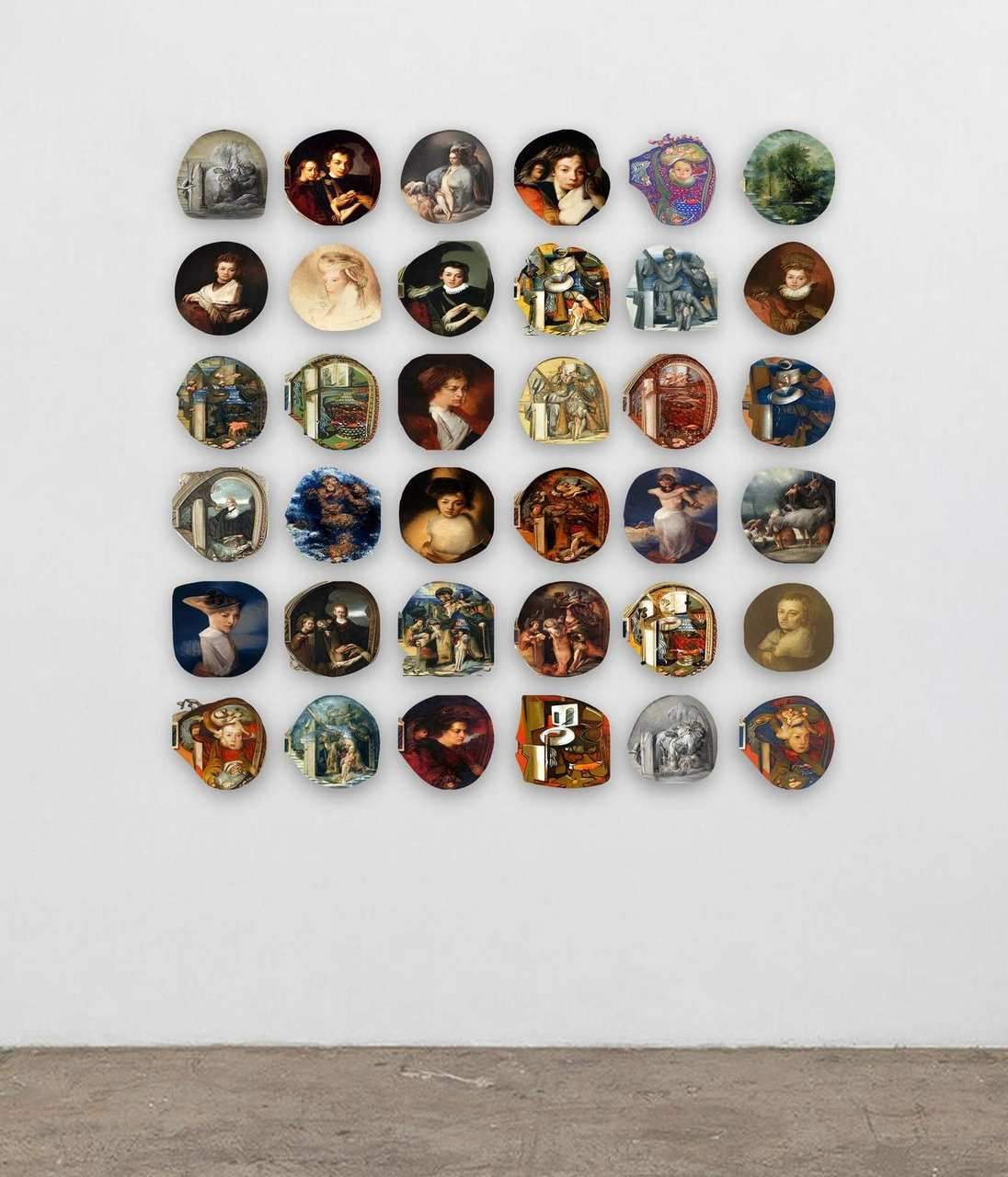

Perpetual Dreaming Machine

2021

A neural network set loose in eight centuries of painting, dreaming its way from one canvas to the next.

Perpetual Dreaming Machine is a generative video installation in which a neural network moves continuously through a latent space learned from over 80,000 paintings from western art history, spanning the 14th to the 20th century. Visitors watch the machine’s output evolve in real time: it does not reproduce any single artwork, but interpolates between learned representations of style, composition, and form across centuries.

We built the generative system on a VQGAN+CLIP pipeline combined with guided diffusion. VQGAN provides the image synthesis model; CLIP steers the generation by measuring the distance between the current image and a target embedding in a shared image-text space; guided diffusion refines the output across iterative steps. The system runs as a containerised Python service, served over an API with WebSocket support so that output can be streamed to display clients in real time. Perlin noise introduces smooth variation into the generation process over time, so the system never settles on a single static image.
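To make the role of Perlin noise concrete, here is a minimal sketch of how smooth noise can keep a latent vector drifting over time. It is an illustration under stated assumptions, not the installation's code: the function names `make_perlin_1d` and `latent_at`, the 8-dimensional latent, and the `speed` and `amplitude` values are all hypothetical, and the sketch uses plain 1D gradient noise from the standard library rather than any particular noise package.

```python
import math
import random


def fade(t: float) -> float:
    """Perlin's quintic easing curve: 6t^5 - 15t^4 + 10t^3."""
    return t * t * t * (t * (t * 6 - 15) + 10)


def make_perlin_1d(seed: int, period: int = 256):
    """Return a 1D gradient-noise function; its values stay within [-0.5, 0.5]."""
    rng = random.Random(seed)
    # One random slope per integer lattice point, repeating every `period`.
    gradients = [rng.uniform(-1.0, 1.0) for _ in range(period)]

    def noise(x: float) -> float:
        i = math.floor(x)
        t = x - i  # position inside the current lattice cell, in [0, 1)
        g0 = gradients[i % period]
        g1 = gradients[(i + 1) % period]
        # Each lattice point contributes its slope times the offset from it.
        n0 = g0 * t
        n1 = g1 * (t - 1.0)
        # Blend the two contributions with the easing curve.
        return n0 + fade(t) * (n1 - n0)

    return noise


def latent_at(base, noises, t, speed=0.05, amplitude=1.0):
    """Offset each latent coordinate by its own noise channel sampled at time t.

    `base` is a hypothetical starting latent; `speed` and `amplitude`
    are illustrative values, not settings from the installation.
    """
    return [b + amplitude * n(t * speed) for b, n in zip(base, noises)]


if __name__ == "__main__":
    base = [0.0] * 8  # assumed toy latent size
    noises = [make_perlin_1d(seed=s) for s in range(len(base))]
    for frame in range(3):
        t = 10.0 + frame / 25  # assumed 25 frames per second
        print([round(v, 4) for v in latent_at(base, noises, t)])
```

Because each coordinate follows its own noise channel, consecutive frames differ only slightly while the vector keeps moving. In a full pipeline a vector like this would feed the image synthesis model in place of the toy list used here.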

The training corpus of over 80,000 paintings conditions the model’s sense of the visual vocabulary of historical western art. The result is a machine that has developed its own internal representation of what that corpus looks and feels like, and expresses it in a continuous generative loop.

Tech

Venue

Various

2021

Team
